Let’s go on talking about multithreading in the Linux kernel. Last time I told you about interrupts, the ways to process them, and tasklets. Since I’ve wanted it to be one article, I will refer to tasklets in the story about the workqueue, and assume that the reader is already familiar with them.

Just like the last time, I will try to make my story as detailed as possible.

Articles of the Series:

-

Multitasking in the Linux Kernel Interrupts and Tasklets

- Multitasking in the Linux kernel. Workqueues

Workqueue

Workqueues are more complex and heavy entities, than tasklets. I will not even try to describe here all the implementation details, but I hope to review the most important things in some detail.

Workqueues, just like tasklets, serve for the deferred processing of interrupts (but we can also use them for other purposes), but, unlike tasklets, they are run in the context of the kernel-process. Thus, they are not required to be atomic, and can use the sleep() function, as well as various tools for synchronization, etc.

First, let’s see how the process of processing a workqueue works. The picture below shows it in a very approximate and simplistic way. I’ll describe in detail how everything actually works.

Several entities are involved here.

Firstly, the work item (work, for short) is a structure that describes a function (for example, the interrupt handler) that we want to schedule. It can be treated as the analogy of the tasklet structure. During scheduling, tasklets were added to queues that were hidden from the user. Now, we should use a special queue – a workqueue.

Tasklets are handled by the scheduling function, and workqueues are processed by special threads called workers.

Workers provide the asynchronous execution of works from a workqueue. Despite the fact that they call works in turn, the sequential execution is usually out of the question, as sleeping, waiting etc. take place here.

Generally, workers are kernel threads, meaning that they are controlled by the main scheduler of the Linux kernel. But workers often interfere in scheduling for the additional organization of the concurrent execution of works. We’ll talk more about it below.

To outline the main features of the workqueue mechanism, I suggest to examine the API.

About the Queue and Its Creation

alloc_workqueue(fmt, flags, max_active, args...)fmt and args parameters are the printf format for the name and arguments to it. The max_activate parameter is responsible for the maximum number of works that can be executed concurrently from this queue on a single CPU.

We can create a queue with the following flags:

- WQ_HIGHPRI

- WQ_UNBOUND

- WQ_CPU_INTENSIVE

- WQ_FREEZABLE

- WQ_MEM_RECLAIM

Particular attention should be given to the WQ_UNBOUND flag. By the presence of this flag, queues are divided into bound and unbound ones.

In bound queues, works are bound to the current CPU during the adding process. So, in such queues works are executed on the core that schedules it. In this respect, bound queues resemble tasklets.

In unbound queues, works can be run on any core.

An important feature of the implementation of workqueues in the Linux kernel is the additional organization of concurrent execution, which is present in bound queues. You can read more about it below. As for now, I can say that it is performed in such a way as to reduce the use of memory, and, at the same time, the processor would not idle. All of this is implemented with the assumption that one work does not use too many CPU cycles.

There’s no such thing for unbound queues. In fact, these queues simply provide the context for works and run them as soon as possible.

Thus, we should use unbound queues when an intensive load on the CPU is expected, as in this case the scheduler will take care of the concurrent execution on several cores.

By analogy with tasklets, we can set the priority of execution for works. It can be either normal or high. The priority is the same for the entire queue. By default, a queue has the normal priority, and if we define the WQ_HIGHPRI flag, the priority will be high.

The WQ_CPU_INTENSIVE flag is reasonable for bound queues only. This flag is a rejection to take part in additional organization of concurrent execution. You should use this flag, when it is expected that works will spend a lot of CPU time. In this case, it is better to shift responsibility to the scheduler. Read more about it below.

WQ_FREEZABLE and WQ_MEM_RECLAIM flags are specific and go beyond the topic, so we won’t dwell on them.

Sometimes it is reasonable not to create our own queues, but use general ones.

Here are the main of them:

- system_wq – a bound queue for fast works;

- system_long_wq – a bound queue for works that are expected to run for a long time;

- system_unbound_wq – an unbound queue.

Works and Their Scheduling

Now, let’s deal with works. First, look at the initialization, declaration and preparation macros:

/* during the compile time */

DECLARE(_DELAYED)_WORK(name, void (*function)(struct work_struct *work));

/* during the runtime */

INIT(_DELAYED)_WORK(_work, _func);

/* to change the function being performed */

PREPARE(_DELAYED)_WORK(_work, _func);

The following functions help to add works to queues:

bool queue_work(struct workqueue_struct *wq, struct work_struct *work);

bool queue_delayed_work(struct workqueue_struct *wq, struct delayed_work *dwork, unsigned long delay); /* work will be added to the queue only after the delay */That’s what we should dwell on. Despite the fact that we specify the queue as a parameter, works are actually added not to workqueues as it may seem, but to absolutely another entity – to the queue-list of the worker_pool structure. In fact, the worker_pool structure is the most important entity in the organization of the workqueue mechanism, although it remains behind the scenes for the user. Workers work with them, and they also contain all the basic information.

Now, let’s see what pools there are in the system.

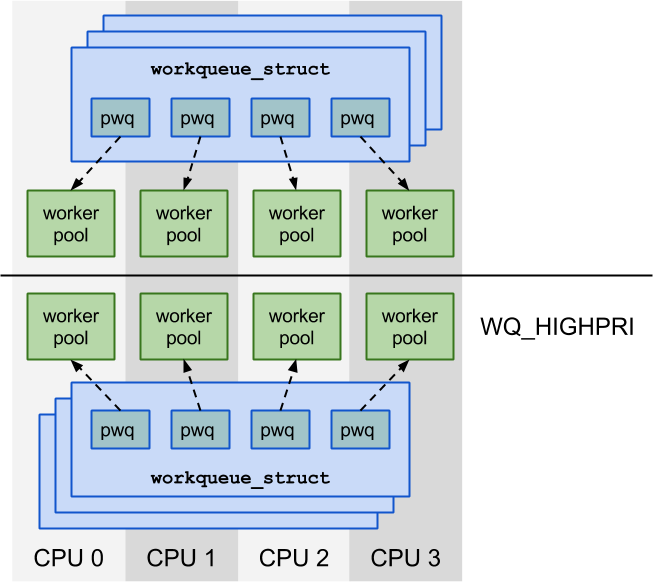

First of all, let’s take a look at pools for bound queues (at the picture). Two worker pools are allocated for each CPU: one for high priority works, the other one for works with normal priority. So, if we have four cores, there will be eight pools, despite the fact that there can be any number of workqueues.

When we create a workqueue, a service pool_workqueue (pwq) is allocated for each CPU. Each pool_workqueue is associated with a worker pool that is allocated on the same CPU and corresponds to the priority of the queue type. The worker pool interacts with the workqueue via them.

Workers perform works from the worker pool indiscriminately, no matter, which workqueue they originally belonged to.

Worker pools are allocated dynamically for unbound queues. We can divide all queues into equivalence classes according to their parameters. A worker pool is created for each such class. These are accessed by using a special hash table, in which the key is a special set of parameters, and the value is the worker pool.

In fact, unbound queues are a bit more complicated: while pwq and queues are created for each CPU, they’re created for each NUMA node here, but it’s the additional optimization that won’t be considered in details.

Some Trifles

For completeness, let’s take a look at several functions from the API:

/* Force Quit */

bool flush_work(struct work_struct *work);

bool flush_delayed_work(struct delayed_work *dwork);

/* Cancel the work execution */

bool cancel_work_sync(struct work_struct *work);

bool cancel_delayed_work(struct delayed_work *dwork);

bool cancel_delayed_work_sync(struct delayed_work *dwork);

/* Remove the queue */

void destroy_workqueue(struct workqueue_struct *wq);The Way Workers Do Their Job

Since we’ve got familiar with the API, let’s try to understand how all of it works.

Each pool has a set of workers that deal with tasks. At that, the number of workers changes dynamically, adjusting to the current situation.

As we know, workers are threads that execute works in the context of the core. The worker takes them one by one from the associated worker pool. As we already know, works can belong to different source queues.

Workers can be in three different logic states: they can be idling, running or managing.

A worker can idle and do nothing, for example, when all works are already running. When the worker enters this state, it falls asleep, and will not be executed till it’s awakened. If it is not required to manage the pool and the list of planned works is not empty, the worker begins to execute them. We will call such workers as running workers.

If it is required to manage the pool, the worker becomes the pool manager. Pools can have either one managing worker or none at all. Its task is to maintain the optimum number of workers per pool. How does it do this? First, it removes workers that have been idle for a while. Second, it creates new workers, in case the following three conditions are met:

- there are tasks to be performed (works in the pool);

- there are no idling workers;

- there are no running workers (i.e. active and not sleeping).

But there are some nuances in the last condition. If queues of the pool are not bound, this condition is always true for them. The same is true in case if the worker performs a task from a bound queue, but with the WQ_CPU_INTENSIVE flag. Since workers deal with works from the general pool (which is one of the two per each core at the picture above), in case if queues are bound, it turns out that some of them are accounted for as running, and some are not. From this it follows that performance of works from the WQ_CPU_INTENSIVE queue may start not immediately, but they do not prevent other works to perform. It should now be clear why this flag is called this way and why we use it when it is expected that works will run for a while.

Running workers «management» is performed directly from the main scheduler of the Linux kernel. This control mechanism ensures the optimum concurrency level, not allowing the workqueue to create too many workers, but not making works wait for too long either.

If interested, look at the worker’s function in the core, it’s called worker_thread().

You can learn more about the described functions and structures in include/linux/workqueue.h, kernel/workqueue.c and kernel/workqueue_internal.h files. You can also find the documentation about the workqueue in Documentation/workqueue.txt.

We should also note that the workqueue mechanism is used in the kernel not only for the deferred processing of interrupts (though this scenario is quite common).

Thus, we have reviewed mechanisms of the deferred processing of interrupts in the Linux kernel – tasklet and workqueue – that represent a special form of multitasking. You can read more about tasklets and workqueue in "Linux Device Drivers" by Jonathan Corbet, Greg Kroah-Hartman, Alessandro Rubini, but their information is a bit outdated.

You could also read “Linux Kernel Development” by Robert Love.

To Be Continued…

In the next part, I will tell you about the protothread and cooperative multitasking. I will also try to compare all of these seemingly different entities and extract some useful ideas.

0 comments

Upload image